Charter Growth, Fixed Infrastructure, and the Second Wave of Closures in Texas

A collaboration between Lewis McLain & AI

Charter schools are not new to Texas. They have existed for about three decades. Many of us have written about them before, including in earlier citybaseblog.net discussions, when they were smaller, experimental, and assumed to be complementary. The original expectation was that charters would remain modest in scale and exert limited fiscal pressure on traditional school districts.

What has changed is not their existence but their speed of growth and their concentration in major metropolitan areas. Charter enrollment is no longer marginal. In some cities, it has crossed thresholds where incremental growth produces structural consequences. The alarm is not ideological. It is mathematical.

Texas now operates two parallel public education systems at meaningful scale. That reality has produced a second wave of school closures across the state, financial strain in districts that have already consolidated once, and growing anguish for locally elected school boards who must make decisions that feel like betrayals to the communities they serve.

Not New — But Now at Critical Mass

Charter schools began as alternatives designed to foster innovation and provide parental choice. Early debate assumed charters would remain small relative to the district system. That assumption no longer holds.

In San Antonio, charter enrollment has grown from roughly 3 percent of public school students a decade ago to approximately 13 percent today. The number of charter campuses within the boundaries of the city’s largest districts has nearly doubled since before the pandemic. Tens of thousands of students have shifted systems over time.

This is not drift. It is redistribution at scale.

When charter share moves from low single digits into double digits, the effects are nonlinear. Small changes can be absorbed. Structural shifts cannot.

Enrollment Loss and the Reality of Stranded Costs

Texas funds schools largely on an attendance basis. When a student enrolls in a charter school, state funding follows that student. That mechanism appears neutral: public dollars remain within public education.

But the system was not designed for rapid enrollment fragmentation.

What Actually Declines (Variable Costs)

Some costs fall when enrollment drops:

- Instructional materials

- Certain hourly staffing

- Some food service expenses

- A small portion of utilities

These are real savings. But they represent a minority of total expenditures.

What Does Not Decline (Fixed and Semi-Fixed Costs)

Most district costs are fixed or slow-moving:

Facilities Built for Original Capacity

Campuses were designed for peak enrollment projections. When enrollment falls:

- Gyms remain full size.

- Football stadiums remain full size.

- Auditoriums remain full size.

- Cafeterias remain full size.

- HVAC systems condition entire buildings.

- Roofs must be maintained across full square footage.

- Security systems operate across entire campuses.

A high school built for 2,500 students does not become a 1,800-student cost structure simply because seats are empty.

You cannot operate 60 percent of a stadium.

You cannot heat only part of a hallway.

You cannot shrink a roof.

Utilities, insurance, maintenance, and capital upkeep do not scale linearly with headcount.
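
The fixed-versus-variable split can be made concrete with a small calculation. This is a minimal sketch, assuming a single campus with one block of fixed costs, a flat variable cost per student, and flat per-student funding. Every figure in it (`FIXED_COSTS`, `VARIABLE_COST_PER_STUDENT`, `REVENUE_PER_STUDENT`) is a hypothetical placeholder, not actual Texas funding or district data.

```python
# Minimal sketch: per-pupil cost and funding gap when most costs are fixed.
# All dollar amounts are hypothetical placeholders, not actual district or state figures.

FIXED_COSTS = 15_000_000           # facilities, utilities, insurance, core staff (hypothetical)
VARIABLE_COST_PER_STUDENT = 3_000  # materials, hourly staff, food service (hypothetical)
REVENUE_PER_STUDENT = 9_000        # simplified flat per-student funding (hypothetical)


def cost_per_pupil(enrollment: int) -> float:
    """Fixed costs spread over current enrollment, plus the per-student variable cost."""
    if enrollment <= 0:
        raise ValueError("enrollment must be positive")
    return FIXED_COSTS / enrollment + VARIABLE_COST_PER_STUDENT


def funding_gap(enrollment: int) -> int:
    """Per-student revenue minus total cost; a negative value is a shortfall."""
    revenue = REVENUE_PER_STUDENT * enrollment
    cost = FIXED_COSTS + VARIABLE_COST_PER_STUDENT * enrollment
    return revenue - cost


# A campus built for 2,500 students, before and after losing 700 of them.
for students in (2_500, 1_800):
    print(f"{students:,} students: cost per pupil ${cost_per_pupil(students):,.2f}, "
          f"net {funding_gap(students):+,}")
```

Under these assumptions the campus breaks even at 2,500 students. Losing 700 students removes $6.3 million in revenue but only $2.1 million in variable cost, leaving a $4.2 million shortfall, and the cost per remaining pupil rises from $9,000 to about $11,333.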

Bonded Debt Service

Facilities were financed through voter-approved bonds. Debt service is fixed. Enrollment decline does not reduce bond payments. In fact, debt per pupil increases as enrollment declines.

That affects financial ratios, credit perception, and long-range planning.
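
A short sketch shows why the per-pupil burden rises faster than enrollment falls. It assumes a fixed annual debt-service payment; the `ANNUAL_DEBT_SERVICE` amount and the enrollment levels are hypothetical, chosen only to make the arithmetic easy to follow.

```python
# Minimal sketch: fixed annual debt service spread over a shrinking enrollment.
# The debt-service amount and enrollments are hypothetical placeholders.

ANNUAL_DEBT_SERVICE = 40_000_000  # hypothetical bond payment; does not change with enrollment


def debt_service_per_pupil(enrollment: int) -> float:
    """Annual bond payment divided by the number of students enrolled."""
    if enrollment <= 0:
        raise ValueError("enrollment must be positive")
    return ANNUAL_DEBT_SERVICE / enrollment


for students in (50_000, 45_000, 40_000):
    print(f"{students:,} students: ${debt_service_per_pupil(students):,.2f} per pupil")
```

In this example a 20 percent enrollment decline raises debt service per pupil by 25 percent, from $800 to $1,000, because the burden scales with one over enrollment rather than shrinking with it.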

Transportation Networks

Bus routes are geographic. Students leaving for charters are not clustered neatly for route elimination. A district may still need to run a bus for 28 students instead of 40.

Transportation cost per student rises even as enrollment falls.
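
The 28-versus-40 example works out as follows. This sketch assumes a route's annual cost is set by its geography and stays the same when riders leave; `ROUTE_COST` is a hypothetical figure standing in for driver, fuel, and maintenance costs.

```python
# Minimal sketch: a bus route's cost is tied to geography, not ridership.
# ROUTE_COST is a hypothetical annual figure (driver, fuel, maintenance).

ROUTE_COST = 60_000


def cost_per_rider(riders: int) -> float:
    """Annual route cost divided by the students who ride it."""
    if riders <= 0:
        raise ValueError("a route needs at least one rider")
    return ROUTE_COST / riders


for riders in (40, 28):
    print(f"{riders} riders: ${cost_per_rider(riders):,.2f} per student per year")
```

Here a 30 percent drop in ridership raises the cost per rider by about 43 percent, from $1,500 to roughly $2,143, while the route's total cost stays the same.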

Staffing Thresholds

Operational minimums exist:

- A campus requires a principal.

- A campus requires counseling services.

- A campus requires a nurse.

- A campus requires special education coordination.

You cannot operate at fractional leadership levels. Staffing reductions often require full campus closures rather than marginal trimming.
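
The step-cost nature of minimum staffing can be sketched the same way. This assumes each open campus carries one fixed block of core staffing regardless of how many students attend; `CORE_STAFF_COST_PER_CAMPUS` and the 600-student example are hypothetical.

```python
# Minimal sketch: minimum campus staffing is a step cost per campus, not per student.
# CORE_STAFF_COST_PER_CAMPUS is a hypothetical annual figure covering a principal,
# counseling, a nurse, and special education coordination.

CORE_STAFF_COST_PER_CAMPUS = 500_000


def core_staff_cost_per_student(campuses: int, total_students: int) -> float:
    """Core staffing cost, which depends on campus count, divided across all students."""
    if campuses <= 0 or total_students <= 0:
        raise ValueError("campuses and students must be positive")
    return campuses * CORE_STAFF_COST_PER_CAMPUS / total_students


# The same 600 students housed in two half-empty campuses versus one consolidated campus.
print(f"two campuses: ${core_staff_cost_per_student(2, 600):,.2f} per student")
print(f"one campus:   ${core_staff_cost_per_student(1, 600):,.2f} per student")
```

The saving in this example (about $1,667 versus $833 per student) appears only when an entire campus's minimum staffing goes away, which is why the decision tends to become a closure rather than a trim.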

Extracurricular Infrastructure

Texas districts maintain significant extracurricular infrastructure:

- Stadiums

- Athletic fields

- Band halls

- Fine arts facilities

- Career and technical labs

These programs do not downsize proportionally. They are either maintained or eliminated. And elimination carries cultural consequences.

The Second Wave of Closures

The first wave of school closures in Texas was largely demographic. Neighborhoods aged. Birth rates declined. Suburban migration shifted enrollment patterns.

The second wave is different.

This wave is occurring in areas where population remains substantial, but enrollment has redistributed across systems. More than 45 traditional campuses have closed in the San Antonio metro area since 2014–15. Charter campuses have expanded during the same period.

Districts that have already consolidated once now face another round of closures. That is far more destabilizing.

After the first closure cycle:

- The easiest consolidations are already done.

- Community tolerance declines sharply.

- Remaining schools often serve as identity anchors.

The next closure is rarely peripheral. It cuts deeper.

The Anguish of the School Board

This section cannot be treated clinically.

School board members are not corporate executives managing market share. They are locally elected volunteers or modestly compensated public servants who often ran for office because they love children, believe in public education, and want to serve their communities.

When enrollment decline forces discussion of closure, they sit at a dais facing:

- Parents who are frightened.

- Teachers who are grieving.

- Alumni who remember Friday nights under stadium lights.

- Neighborhood residents who see the school as their community’s heart.

The suggestion of closing a child’s school is not heard as fiscal necessity. It is heard as abandonment.

Board members absorb:

- Accusations of incompetence.

- Claims of political bias.

- Personal attacks.

- Public anger that feels brutal and relentless.

Many of these board members send their own children to those schools. Many taught in those buildings. Many worship in those neighborhoods.

Yet the spreadsheet remains unmoved by anguish.

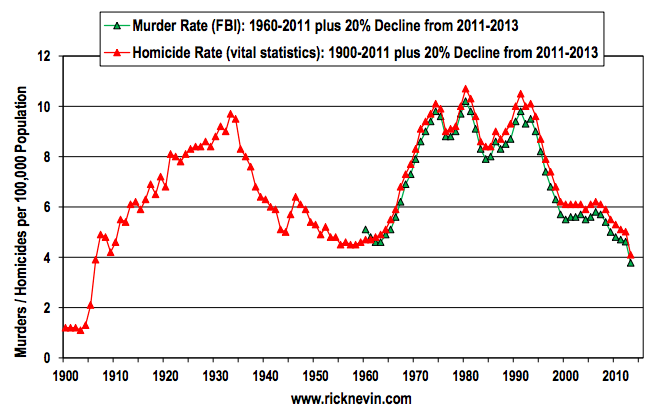

Enrollment charts do not pause because meetings are painful. Bond schedules do not bend because testimony is heartbreaking.

This emotional burden is part of the structural story. The system demands decisions that feel morally injurious even when fiscally unavoidable.

Additional Structural Pressures

Labor Market Fragmentation

Charter expansion creates parallel labor markets. Teacher mobility increases. Recruitment competition intensifies. Salary pressure rises even as district revenue declines.

Marketing Costs

Districts historically relied on geographic assignment. In a competitive landscape, districts must market programs, brand campuses, and actively recruit students — a new layer of expenditure.

Planning Volatility

Ten-year enrollment projections become less reliable. Capital planning becomes more uncertain. Bond timing becomes riskier.

Equity of Infrastructure Burden

Communities have invested heavily in comprehensive district infrastructure. When enrollment fragments, the per-pupil cost of maintaining that infrastructure rises for remaining students.

Governance Differences

Traditional ISDs are governed by elected boards accountable directly to voters. Charter schools are authorized by the state and governed by appointed boards.

Accountability exists in both systems, but its locus differs.

When a district closes a school, the decision is public, political, and deeply personal. When a charter closes, families often return to districts unexpectedly, creating additional planning stress.

The asymmetry matters in governance conversations.

Acknowledging Counterarguments

Charters serve families who seek alternatives. Some demonstrate strong academic outcomes. Some research suggests competitive pressure can improve district performance.

Charters also generally cannot levy local property taxes or issue voter-approved bonds, which creates facility financing challenges for them.

These arguments are real. They deserve recognition.

But structural fiscal pressure on districts remains real as well. Two truths can coexist:

- Charter schools provide choice.

- Charter growth at scale creates systemic strain for districts built on different assumptions.

The Central Alarm

This is not an argument that charters should not exist.

It is an acknowledgment that Texas public education was not originally structured for rapid enrollment fragmentation across two parallel systems.

When charter enrollment moves from marginal to critical mass, the impact is not incremental. It becomes systemic.

Public school districts are not infinitely elastic.

They are built of:

- Concrete and steel

- Stadiums and auditoriums

- Cafeterias and bus routes

- Bond schedules and staffing thresholds

- Deep community attachment

These do not shrink at the speed of enrollment charts.

And school boards — there for the love of children — are left to make decisions that feel like choosing between arithmetic and heartbreak.

That is the reality now facing districts across Texas.
